Self-Training Guided Adversarial Domain Adaptation for Thermal Imagery

Ibrahim Batuhan Akkaya 1,2*, Fazil Altinel 1*, Ugur Halici 2,3

1 Research Center, Aselsan Inc.; 2 Middle East Technical University; 3 NOROM Neuroscience and Neurotechnology Excellency Center

* indicates equal contribution

Abstract

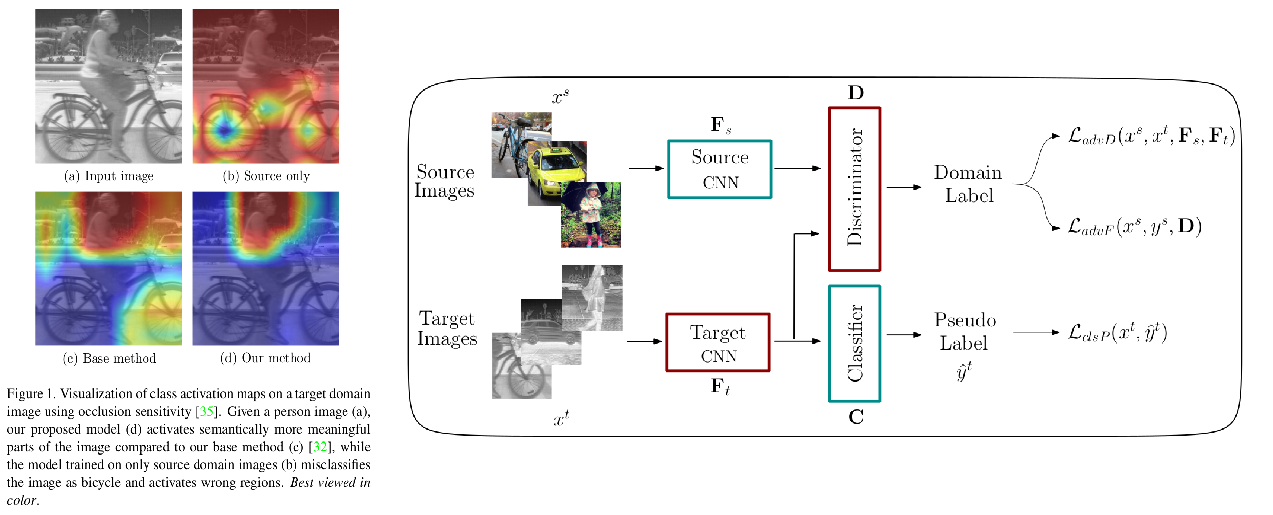

Deep models trained on large-scale RGB image datasets have shown tremendous success, and it is important to apply such models to real-world problems. However, these models suffer from a performance bottleneck under illumination changes. Thermal IR cameras are more robust against such changes and can therefore be very useful for real-world problems. To investigate the efficacy of combining the feature-rich visible spectrum and thermal image modalities, we propose an unsupervised domain adaptation method that does not require RGB-to-thermal image pairs. We employ the large-scale RGB dataset MS-COCO as the source domain and the thermal dataset FLIR ADAS as the target domain to demonstrate the results of our method. Although adversarial domain adaptation methods aim to align the distributions of the source and target domains, simply aligning the distributions cannot guarantee perfect generalization to the target domain. To this end, we propose a self-training guided adversarial domain adaptation method to improve the generalization capabilities of adversarial domain adaptation methods. To perform self-training, pseudo labels are assigned to samples in the target thermal domain so that the model learns more generalized representations for the target domain. Extensive experimental analyses show that our proposed method achieves better results than state-of-the-art adversarial domain adaptation methods. The code and models are publicly available.
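The pseudo-labeling step mentioned in the abstract can be illustrated with a minimal sketch. The abstract does not state how pseudo labels are selected, so the confidence-threshold rule below is an assumption (a common self-training heuristic), not the authors' procedure. The function names `softmax` and `assign_pseudo_labels` and the `threshold` value are hypothetical.

```python
import math


def softmax(logits):
    """Convert raw class scores into probabilities (numerically stable)."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def assign_pseudo_labels(target_logits, threshold=0.9):
    """Assign pseudo labels to unlabeled target-domain samples.

    ASSUMPTION: a sample is kept only if the classifier's top class
    probability reaches `threshold`; the selection rule used in the
    paper may differ.

    Returns a list of (sample_index, pseudo_label, confidence) tuples.
    """
    selected = []
    for idx, logits in enumerate(target_logits):
        probs = softmax(logits)
        # Index of the most probable class for this sample.
        label = max(range(len(probs)), key=probs.__getitem__)
        if probs[label] >= threshold:
            selected.append((idx, label, probs[label]))
    return selected


if __name__ == "__main__":
    # Hypothetical classifier outputs for three thermal images, three classes.
    logits = [[5.0, 0.0, 0.0], [0.1, 0.0, 0.2], [0.0, 6.0, 0.5]]
    for idx, label, conf in assign_pseudo_labels(logits):
        print(f"sample {idx}: pseudo label {label} (confidence {conf:.3f})")
```

Under this sketch, only confidently classified target samples would feed back into training as if they were labeled, while ambiguous ones are skipped to limit the noise that incorrect pseudo labels introduce.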

BibTeX

@InProceedings{sgada2021,
    author    = {Akkaya, Ibrahim Batuhan and Altinel, Fazil and Halici, Ugur},
    title     = {Self-Training Guided Adversarial Domain Adaptation for Thermal Imagery},
    booktitle = {Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR) Workshops},
    month     = {June},
    year      = {2021},
    pages     = {4322-4331}
}